A Metal Renderer in Swift Playgrounds (Part 9)

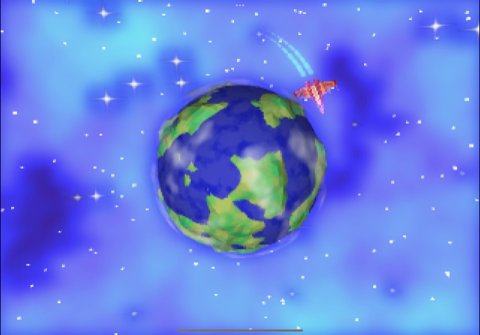

I’d now added nice rendering features like bloom and planetary shadows, and was using noise to provide a nebula effect on the skybox. I had a ringed planet and a spaceship orbiting it, but I wanted to make the solar system feel more populated. One option was to create a space station or add other ships, but before I got to that I wanted to experiment further with noise for procedural generation, and see what I could do with a rocky planet. I imaginatively named the ringed gas giant “Planet A” and set about creating a rocky, habitable “Planet B”.

Creating Planet B

When adding 3D noise, I tried offsetting Planet A’s vertices by random amounts, which worked but looked a bit strange on a gas giant. This would work very well for a rocky planet, however, with the heights representing the tops of mountains and the depths the very bottom of the ocean. When generating icosahedra, I’m intentionally setting the normal for each vertex to the face normal to enforce a low poly look. In order to stick with this approach, the offsets must be applied as part of the mesh creation so that the correct face normal can be calculated from the displaced vertices. I extended the icosahedron creation function to optionally add random offsets to the vertices based on value noise. Using this, I created a subdivided icosahedron with random offsets to represent the planet’s surface. At this point it looked a bit like a potato floating in space.
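As a rough illustration of why the offsets have to happen before the normals are computed, here is a minimal sketch in plain Swift. It is not the code from this project: `Vec3`, `hashNoise`, `displaced` and `faceNormal` are hypothetical names, and `hashNoise` is a simple hash standing in for real value noise.

```swift
import Foundation

// Minimal 3-component vector so the sketch does not depend on simd.
struct Vec3: Equatable {
    var x, y, z: Double

    static func + (a: Vec3, b: Vec3) -> Vec3 { Vec3(x: a.x + b.x, y: a.y + b.y, z: a.z + b.z) }
    static func - (a: Vec3, b: Vec3) -> Vec3 { Vec3(x: a.x - b.x, y: a.y - b.y, z: a.z - b.z) }
    static func * (a: Vec3, s: Double) -> Vec3 { Vec3(x: a.x * s, y: a.y * s, z: a.z * s) }

    var length: Double { (x * x + y * y + z * z).squareRoot() }
    var normalized: Vec3 { self * (1 / length) }

    func cross(_ o: Vec3) -> Vec3 {
        Vec3(x: y * o.z - z * o.y, y: z * o.x - x * o.z, z: x * o.y - y * o.x)
    }
}

// Hypothetical stand-in for value noise: a deterministic hash of the position, in [0, 1).
func hashNoise(_ p: Vec3) -> Double {
    let s = sin(p.x * 12.9898 + p.y * 78.233 + p.z * 37.719) * 43758.5453
    return s - floor(s)
}

// Push a vertex in or out along its own direction from the sphere's centre.
func displaced(_ v: Vec3, amplitude: Double) -> Vec3 {
    let height = (hashNoise(v) - 0.5) * 2 * amplitude   // in [-amplitude, amplitude)
    return v.normalized * (1 + height)
}

// Flat face normal computed from the already-displaced corners
// (counter-clockwise winding assumed).
func faceNormal(_ a: Vec3, _ b: Vec3, _ c: Vec3) -> Vec3 {
    (b - a).cross(c - a).normalized
}
```

Because the offset depends only on the vertex position, a vertex shared by several faces is displaced identically in each of them, so the surface stays closed. Each face then gets a single normal from its three displaced corners, which is what gives the flat-shaded look.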

I then added another subdivided icosahedron (this time without random offsets) to represent the ocean. I placed it at the same position as the surface, and tweaked its scale so that it was larger than the lowest points of the surface, and smaller than the highest points. I created custom fragment shaders for both, with the surface shader using noise to mix between dark and light green, and the ocean shader using it to mix between light and dark blue. This resulted in something resembling a planet with an ocean.
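The colour mixing is just a linear interpolation driven by the noise value. The real thing lives in a fragment shader, but the idea can be sketched on the CPU in Swift. The `RGB` type, the function names and the specific colour values below are placeholders, not taken from the project.

```swift
// RGB colour with components in 0...1.
struct RGB { var r, g, b: Double }

// Linear interpolation, the same operation as `mix` in Metal Shading Language.
func mix(_ a: Double, _ b: Double, _ t: Double) -> Double { a + (b - a) * t }

func mix(_ a: RGB, _ b: RGB, _ t: Double) -> RGB {
    RGB(r: mix(a.r, b.r, t), g: mix(a.g, b.g, t), b: mix(a.b, b.b, t))
}

// Surface colour for a noise sample; the noise is clamped to 0...1 first.
func surfaceColour(noise: Double) -> RGB {
    // Placeholder colours, chosen only for illustration.
    let darkGreen = RGB(r: 0.05, g: 0.30, b: 0.10)
    let lightGreen = RGB(r: 0.40, g: 0.75, b: 0.30)
    return mix(darkGreen, lightGreen, min(max(noise, 0), 1))
}
```

The ocean would use the same function shape with two blues in place of the greens.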

Given this is stylised rather than realistic, I thought about ways to give the planet the appearance of a visible atmosphere. I am interested in adding a cel shading effect at a later point (more out of curiosity than because I think it would work particularly well), and a similar technique could be used to create an atmosphere “outline” around the planet. While thinking about it, the idea of having a layer of clouds came into my head. I was able to implement this cloud layer as an icosahedron using the same noise technique as for the planet surface and ocean. The only difference was that instead of using noise to vary the colour, the cloud icosahedron was completely white and the noise was used to vary the opacity. I also had to ensure that it was rendered last, along with the rings, as transparency is order dependent.
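The two ideas in this paragraph, noise driving opacity instead of colour and transparent objects being drawn last, can be sketched like this. `RGBA`, `Drawable`, `cloudColour` and `drawOrder` are hypothetical names for illustration, and the sketch only separates opaque from transparent objects; it does not sort transparent objects against each other.

```swift
// Colour with an alpha channel, components in 0...1.
struct RGBA { var r, g, b, a: Double }

// Clouds: the colour is constant white, the noise only drives the alpha channel.
func cloudColour(noise: Double) -> RGBA {
    RGBA(r: 1, g: 1, b: 1, a: min(max(noise, 0), 1))
}

struct Drawable {
    let name: String
    let isTransparent: Bool
}

// Opaque objects first, transparent ones last, keeping the relative order
// within each group.
func drawOrder(_ items: [Drawable]) -> [Drawable] {
    items.filter { !$0.isTransparent } + items.filter { $0.isTransparent }
}
```

With a scene list of clouds, surface, rings and ocean, `drawOrder` would return surface, ocean, clouds, rings.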

Finally, I updated the spaceship to orbit Planet B. Then, to make the planet seem more alive, I set the ocean and cloud icosahedra rotating at different slow speeds, giving the impression that they were moving independently.

Tiny Solar System

There were now two planets and a star, but they were stationary, which is not how physics is supposed to work. I contemplated adding simulated gravity, but since I just wanted entirely predictable, stable orbits, specifying the planets’ positions as a function of time was good enough. So on each uniform update I moved the planets to “orbit” around the star at some distance. I created a smaller icosahedron representing a moon and set it to orbit Planet B in the same way, and just like that I had a tiny solar system! One cool aspect of this was seeing the planetary occlusion working, with the moon casting a shadow on Planet B, and Planet B casting a shadow on Planet A.
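Position as a function of time boils down to a circle parameterised by elapsed seconds. Here is a minimal sketch, assuming circular orbits in the x-z plane with the star at the origin; the `Orbit` type, its fields and the numbers in the usage note are made up for illustration.

```swift
import Foundation

struct Orbit {
    var radius: Double
    var period: Double      // seconds per full revolution
    var phase: Double = 0   // starting angle in radians

    // Offset from the body being orbited, in the x-z plane.
    func offset(at time: Double) -> (x: Double, z: Double) {
        let angle = phase + 2 * Double.pi * time / period
        return (x: radius * cos(angle), z: radius * sin(angle))
    }
}

// A moon's position is its own offset added to the position of its planet,
// which in turn is an offset from the star at the origin.
func moonPosition(planet: Orbit, moon: Orbit, at time: Double) -> (x: Double, z: Double) {
    let p = planet.offset(at: time)
    let m = moon.offset(at: time)
    return (x: p.x + m.x, z: p.z + m.z)
}
```

For example, with `Orbit(radius: 10, period: 60)` for the planet and `Orbit(radius: 1.5, period: 5)` for the moon, the moon starts 11.5 units from the star along the x axis. There is no state to accumulate error, so the orbits stay stable no matter how long the scene runs.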

This worked really well, but I now had quite a lot of moving parts that were being individually updated in separate parts of the code. Instead of writing code to update each planet’s position manually, I wanted to be able to create some animation paths for each planet up front and then apply them all when updating the uniforms.

In my design, an animation generates a matrix representing its state at a point in time. Since it accepts a time delta rather than an absolute time, it is stateful so that it can remember how much time has passed. The animation is an interface, and I created implementations for rotation and translation animations, with the option for linear or smooth step transitions. For rotation I added the ability to specify an origin and an optional offset so that it could be used for orbits. Lastly, I implemented some meta-animation types for sequencing one animation after another, running two animations concurrently, and repeating an animation a fixed number of times or forever.
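To make the shape of such a system concrete, here is a cut-down sketch. It is an assumption-laden illustration rather than the project's actual code: `Mat4`, `Easing`, `Animation`, `Translate`, `SequenceAnimation` and `ConcurrentAnimation` are all hypothetical names, only translation is implemented, and rotation and repetition are left out to keep it short.

```swift
// Column-major 4x4 matrix stored as 16 doubles; a stand-in for a simd matrix.
struct Mat4: Equatable {
    var m: [Double]
    static let identity = Mat4(m: [1, 0, 0, 0,  0, 1, 0, 0,  0, 0, 1, 0,  0, 0, 0, 1])

    static func translation(_ x: Double, _ y: Double, _ z: Double) -> Mat4 {
        var r = identity
        r.m[12] = x; r.m[13] = y; r.m[14] = z
        return r
    }

    static func * (a: Mat4, b: Mat4) -> Mat4 {
        var r = Mat4(m: Array(repeating: 0, count: 16))
        for col in 0..<4 { for row in 0..<4 { for k in 0..<4 {
            r.m[col * 4 + row] += a.m[k * 4 + row] * b.m[col * 4 + k]
        } } }
        return r
    }
}

enum Easing {
    case linear, smoothStep
    func apply(_ t: Double) -> Double {
        switch self {
        case .linear: return t
        case .smoothStep: return t * t * (3 - 2 * t)
        }
    }
}

protocol Animation: AnyObject {
    var isFinished: Bool { get }
    // Advances internal time by `delta` seconds and returns the current transform.
    func update(delta: Double) -> Mat4
}

final class Translate: Animation {
    private let to: (Double, Double, Double)
    private let duration: Double
    private let easing: Easing
    private var elapsed = 0.0   // the state that makes the animation stateful

    init(to: (Double, Double, Double), duration: Double, easing: Easing = .linear) {
        self.to = to; self.duration = duration; self.easing = easing
    }

    var isFinished: Bool { elapsed >= duration }

    func update(delta: Double) -> Mat4 {
        elapsed = min(elapsed + delta, duration)
        let t = easing.apply(elapsed / duration)
        return .translation(to.0 * t, to.1 * t, to.2 * t)
    }
}

// Runs steps one after another, keeping the final transform of finished steps.
// Simplification: time left over when a step finishes is dropped.
final class SequenceAnimation: Animation {
    private let steps: [Animation]
    private var index = 0
    private var completed = Mat4.identity

    init(_ steps: [Animation]) { self.steps = steps }

    var isFinished: Bool { index >= steps.count }

    func update(delta: Double) -> Mat4 {
        guard index < steps.count else { return completed }
        let total = steps[index].update(delta: delta) * completed
        if steps[index].isFinished { completed = total; index += 1 }
        return total
    }
}

// Runs two animations at once and combines their transforms.
final class ConcurrentAnimation: Animation {
    private let a: Animation, b: Animation
    init(_ a: Animation, _ b: Animation) { self.a = a; self.b = b }
    var isFinished: Bool { a.isFinished && b.isFinished }
    func update(delta: Double) -> Mat4 { a.update(delta: delta) * b.update(delta: delta) }
}
```

With this shape, the per-frame code only needs to call `update(delta:)` on each top-level animation and write the resulting matrix into the uniforms. A repeat wrapper would additionally need some way to reset or recreate its child, which is one of the design questions a delta-based, stateful interface raises.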

In the past, I’ve experimented with many of the things I’ve implemented for this project, but animation is not one of them and I don’t really have any idea if my planned approach is a good one. Nonetheless, I replaced all the code that included motion with my animation system.

Jitter Bug

Throughout development my feedback loop has been to run the code through Playgrounds, and when I feel like significant progress has been made, I push a version to TestFlight and run the “built” version on my iPad. I also install it on my iPhone, but beyond checking that it runs I haven’t been paying much attention to it on other devices. On one occasion I noticed that on the iPhone, and only on the iPhone, the clouds of Planet B appeared to jump around from time to time. Similarly, the rings of Planet A jumped around from time to time. More rarely, the spaceship moved erratically as well.

What followed was several weeks of trying to get to the bottom of the jitter (though obviously with my limited evening and weekend time). I had a few theories as to the cause that I spent time investigating:

- my new animation system

- elements with transparency

- rendering taking too long

- floating point precision

- iPhone-specific issue

It was none of these things. When the application starts, it is meant to allocate space for a separate set of uniforms for each frame in flight. Each frame can then write its uniforms to its own region, knowing that nothing else is reading from it. Except that wasn’t happening, because my code was allocating a single buffer and using it across all frames. Correctly allocating space for three sets of uniforms fixed the issue.
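The bookkeeping behind per-frame uniforms is small enough to sketch without any Metal calls. The following is an illustration under assumptions, not the project's code: `UniformRing` is a hypothetical helper, and the 256-byte alignment is an assumed value that depends on the target device.

```swift
// Tracks which slice of one large uniform buffer the current frame may write to.
struct UniformRing {
    let framesInFlight: Int
    let alignedSize: Int              // bytes reserved per frame
    private(set) var frameIndex = 0

    init(uniformSize: Int, framesInFlight: Int = 3, alignment: Int = 256) {
        self.framesInFlight = framesInFlight
        // Round the per-frame size up to the next multiple of `alignment` (assumed value).
        self.alignedSize = (uniformSize + alignment - 1) / alignment * alignment
    }

    // Total bytes to allocate once at startup: one slice per frame in flight.
    // Allocating only `alignedSize` here reproduces the bug described above.
    var totalLength: Int { alignedSize * framesInFlight }

    // Byte offset of the slice belonging to the current frame.
    var currentOffset: Int { frameIndex * alignedSize }

    // Call once per frame, before writing that frame's uniforms.
    mutating func advance() { frameIndex = (frameIndex + 1) % framesInFlight }
}
```

In a real renderer, `totalLength` would be the length of the buffer created at startup and `currentOffset` would be passed as the `offset` argument when binding it, for example with `setVertexBuffer(_:offset:index:)`. This scheme is usually paired with something like a semaphore so the CPU never gets more than `framesInFlight` frames ahead of the GPU.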

As for why it disproportionately affected transparent objects, my theory is that it’s because these are drawn last, since transparency is order dependent. The earlier an object is drawn, the less likely it is to have its uniforms overwritten by the next frame before they are read. And as for why it was an issue on my iPhone and not my iPad, my theory is that since my iPad is faster, the previous frame’s uniforms had already been read by the time they were overwritten.

It was one of those experiences that was frustrating but necessary: the painful lesson will inform how I approach similar problems in future. In particular, considering draw order and focusing on code that relates to per-frame operations will be higher on my list of areas to investigate next time.